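Product Overview

Info

The Alaya NeW compute service applies end-to-end acceleration to large-model infrastructure. Through algorithm acceleration, compiler optimization, memory optimization, and communication acceleration, it improves training efficiency by 100%, raises GPU utilization by 50%, and increases inference speed by 4x. It offers users an out-of-the-box high-performance model training service, a secure high-performance private model repository, and a dynamic model inference service.

With this high-performance compute service, users can easily deploy DeepSeek models for large-scale inference in the cloud, allocating compute resources flexibly according to actual demand and getting efficient, stable large-model inference.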

Recommended Configuration for Each Model

| DeepSeek version | Parameters (B) | Model size (approx.) | Recommended compute (minimum) | Recommended storage (minimum) |
|---|---|---|---|---|
| DeepSeek-V3 | 671 | FP8: 671 GB | H800 × 16 | 800 GB |
| DeepSeek-R1, 1.58-bit quantized | 671 | 1.58-bit: 131 GB | H800 × 4 | 200 GB |
| DeepSeek-R1 | 671 | FP8: 671 GB | H800 × 16 | 800 GB |
| DeepSeek-R1-Distill-Qwen-1.5B | 1.5 | BF16: 3.55 GB | H800 × 1 | 50 GB |
| DeepSeek-R1-Distill-Qwen-7B | 7 | BF16: 15.23 GB | H800 × 1 | 50 GB |
| DeepSeek-R1-Distill-Llama-8B | 8 | BF16: 16.06 GB | H800 × 1 | 50 GB |
| DeepSeek-R1-Distill-Qwen-14B | 14 | BF16: 29.54 GB | H800 × 1 | 50 GB |
| DeepSeek-R1-Distill-Qwen-32B | 32 | BF16: 65.53 GB | H800 × 1 | 100 GB |
| DeepSeek-R1-Distill-Llama-70B | 70 | BF16: 150 GB | H800 × 2 | 200 GB |
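The model sizes in the table roughly follow from parameter count times bits per parameter. The sketch below reproduces that arithmetic for the weights only. It assumes 80 GB of memory per H800 and 1 GB = 10^9 bytes, and the helper names `weight_size_gb` and `min_gpus_for_weights` are hypothetical. Real deployments also need room for the KV cache and activations, which is one reason a recommended configuration can be larger than this lower bound.

```python
import math

H800_MEMORY_GB = 80  # assumption: 80 GB of memory per H800


def weight_size_gb(params_billion: float, bits_per_param: float) -> float:
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_param / 8


def min_gpus_for_weights(size_gb: float, gpu_memory_gb: float = H800_MEMORY_GB) -> int:
    """Lower bound on GPU count: just enough memory to hold the weights."""
    return math.ceil(size_gb / gpu_memory_gb)


if __name__ == "__main__":
    examples = [
        ("671B at FP8", 671, 8),
        ("671B at 1.58 bits", 671, 1.58),
        ("7B at BF16", 7, 16),
        ("70B at BF16", 70, 16),
    ]
    for label, params, bits in examples:
        size = weight_size_gb(params, bits)
        print(f"{label}: ~{size:.1f} GB, at least {min_gpus_for_weights(size)} GPU(s)")
```

The estimates differ slightly from the table because the nominal parameter counts are rounded and quantized checkpoints may keep some layers at higher precision.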

信息

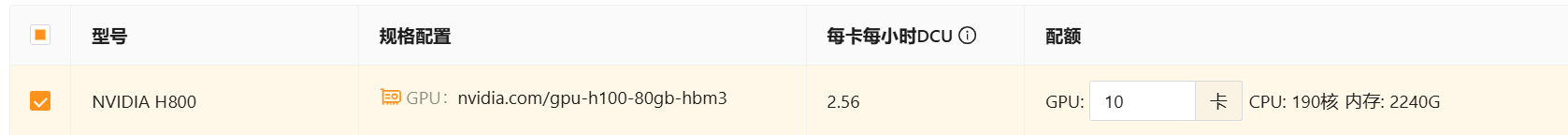

- 在弹性容器集群配置页面用户可便捷配置所需算力资源,如下图所示。

- 在弹性容器集群配置页面用户可便捷配置所需存储资源,存储类型包括文件存储、对象存储、镜像仓库等。

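Model List

The system currently provides an API for the full-scale DeepSeek-R1 model (FP8, unquantized). Users call the model that matches their business needs. To make purchasing and using the API go smoothly, users are advised to register an enterprise account in advance; those who have not yet registered can click the registration link to sign up quickly.

Tip

After logging in to the enterprise account, go to the [Products / Large Model Inference Service] page and click the Enable API Service Now button to start using large-model inference right away.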

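| DeepSeek version | Parameters (B) | Model size (approx.) | Context length | Max chain-of-thought length (1) | Max output length (2) | model |
|---|---|---|---|---|---|---|
| DeepSeek-R1 | 671 | FP8: 671 GB | 64K | 32K | 8K | deepseek-r1 |

(1) Max chain-of-thought length: a key parameter that trades off reasoning completeness against compute cost. Users should adjust it to the specific task and the model's capability.

(2) Max output length: the maximum length of the reply text generated by the model, measured in tokens.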
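The limits in the table can be checked on the client before a request is sent. The following is a minimal sketch under stated assumptions: "K" is read as 1024 tokens, the prompt and the requested output are assumed to share the 64K context window, and the helper name `clamp_max_tokens` is hypothetical. How the service actually counts chain-of-thought tokens against these limits is not specified here, so treat the result as a conservative guide only.

```python
CONTEXT_TOKENS = 64 * 1024     # "64K" context length (assuming K = 1024)
MAX_OUTPUT_TOKENS = 8 * 1024   # "8K" max output length (assuming K = 1024)


def clamp_max_tokens(requested: int, prompt_tokens: int) -> int:
    """Return a max_tokens value that respects both limits from the table.

    Assumes prompt and output share the context window (an assumption,
    not a documented rule of the service).
    """
    if requested <= 0:
        raise ValueError("requested max_tokens must be positive")
    remaining = CONTEXT_TOKENS - prompt_tokens
    if remaining <= 0:
        raise ValueError("prompt already fills the context window")
    return min(requested, MAX_OUTPUT_TOKENS, remaining)


if __name__ == "__main__":
    print(clamp_max_tokens(10_000, 100))   # capped by the 8K output limit
    print(clamp_max_tokens(2_048, 100))    # already within both limits
```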

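Once users have an API Key, they can call the model directly to run tasks. For example, the full-scale deepseek-r1 can be called locally in any of the following ways.

- cURL

- Python

- Go

- JavaScript (using React as an example)

- Java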

cURL

    curl --location 'https://deepseek.alayanew.com/v1/chat/completions' \
      --header 'Content-Type: application/json' \
      --header 'Authorization: Bearer <your_access_token>' \
      --data '{
        "stream": false,
        "messages": [
          {
            "role": "user",
            "content": "Please generate an essay"
          }
        ],
        "model": "deepseek-r1"
      }'

Python

    from openai import OpenAI

    # Point the OpenAI SDK at the Alaya NeW endpoint and pass your API Key.
    client = OpenAI(api_key="<your_access_token>", base_url="https://deepseek.alayanew.com/v1")

    response = client.chat.completions.create(
        model="deepseek-r1",
        messages=[
            {"role": "system", "content": "You are a helpful assistant"},
            {"role": "user", "content": "Hello"},
        ],
        max_tokens=1024,
        temperature=0.7,
        stream=False,
    )

    # Print the text of the first (and only) choice.
    print(response.choices[0].message.content)
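When the `openai` package is not available, the same request can be assembled with the Python standard library. The sketch below only builds the request and parses a reply, so it can be inspected without network access. The helper names `build_chat_request` and `extract_reply` are hypothetical, and the reply shape (`choices[0].message.content`) is assumed to match what the SDK example reads.

```python
import json
import urllib.request

API_URL = "https://deepseek.alayanew.com/v1/chat/completions"


def build_chat_request(api_key: str, user_message: str,
                       model: str = "deepseek-r1",
                       max_tokens: int = 1024) -> urllib.request.Request:
    """Build (but do not send) a non-streaming chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
        "max_tokens": max_tokens,
        "stream": False,
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def extract_reply(response_body: bytes) -> str:
    """Pull the assistant text out of a non-streaming response body."""
    return json.loads(response_body)["choices"][0]["message"]["content"]
```

To send the request, pass the result to `urllib.request.urlopen` and hand the bytes returned by `read()` to `extract_reply`.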

Go

    package main

    import (
        "fmt"
        "io"
        "net/http"
        "strings"
    )

    func main() {
        url := "https://deepseek.alayanew.com/v1/chat/completions"
        method := "POST"

        // Request body for the chat completions endpoint.
        payload := strings.NewReader(`{
      "messages": [
        {
          "content": "You are a helpful assistant",
          "role": "system"
        },
        {
          "content": "Hi",
          "role": "user"
        }
      ],
      "model": "deepseek-r1",
      "frequency_penalty": 0,
      "max_tokens": 2048,
      "presence_penalty": 0,
      "response_format": {
        "type": "text"
      },
      "stop": null,
      "stream": false,
      "stream_options": null,
      "temperature": 1,
      "top_p": 1,
      "tools": null,
      "tool_choice": "none",
      "logprobs": false,
      "top_logprobs": null
    }`)

        client := &http.Client{}
        req, err := http.NewRequest(method, url, payload)
        if err != nil {
            fmt.Println(err)
            return
        }
        req.Header.Add("Content-Type", "application/json")
        req.Header.Add("Accept", "application/json")
        req.Header.Add("Authorization", "Bearer <your_access_token>")

        res, err := client.Do(req)
        if err != nil {
            fmt.Println(err)
            return
        }
        defer res.Body.Close()

        // io.ReadAll replaces the deprecated ioutil.ReadAll (Go 1.16+).
        body, err := io.ReadAll(res.Body)
        if err != nil {
            fmt.Println(err)
            return
        }
        fmt.Println(string(body))
    }

- axios

- fetch

JavaScript (axios)

    const axios = require('axios');

    let data = JSON.stringify({
      "messages": [
        {
          "content": "You are a helpful assistant",
          "role": "system"
        },
        {
          "content": "Hi",
          "role": "user"
        }
      ],
      "model": "deepseek-r1", // model to call: deepseek-r1 / deepseek-r1-lite
      "frequency_penalty": 0,
      "max_tokens": 2048,
      "response_format": {
        "type": "text"
      },
      "stop": null,
      "stream": true, // request a streamed response
      "temperature": 1,
      "logprobs": false,
    });

    let config = {
      method: 'post',
      maxBodyLength: Infinity,
      // When calling from a browser front end, mind cross-origin (CORS) restrictions.
      url: 'https://deepseek.alayanew.com/v1/chat/completions',
      headers: {
        'Content-Type': 'application/json',
        'Accept': 'application/json',
        'Authorization': 'Bearer <your_access_token>'
      },
      data: data
    };

    // With the default responseType, axios buffers the whole body, so for a
    // streamed reply response.data is the raw event-stream text.
    axios(config)
      .then((response) => {
        console.log("Response:", JSON.stringify(response.data));
      })
      .catch((error) => {
        console.log("Error:", error);
      });

JavaScript (fetch, React)

    import React, { useState } from 'react';

    // ChatDemo is an illustrative component name; adapt it to your application.
    export default function ChatDemo() {
      const [requestData, setRequestData] = useState('');

      async function sendRequest() {
        // Keep the payload as an object so that it is serialized exactly once.
        const data = {
          messages: [
            { content: 'You are a helpful assistant', role: 'system' },
            { content: 'Hi', role: 'user' },
          ],
          model: 'deepseek-r1', // model to call: deepseek-r1 / deepseek-r1-lite
          frequency_penalty: 0,
          max_tokens: 2048,
          response_format: { type: 'text' },
          stop: null,
          stream: true, // set to false for a non-streaming response
          temperature: 1,
          logprobs: false,
        };

        try {
          const response = await fetch('https://deepseek.alayanew.com/v1/chat/completions', {
            method: 'POST',
            headers: {
              'Content-Type': 'application/json',
              'Authorization': 'Bearer <your_access_token>', // replace with your API Key
            },
            body: JSON.stringify(data),
          });

          if (!data.stream) {
            // Non-streaming: the whole reply arrives as a single JSON document.
            const jsonData = await response.json();
            const content = jsonData.choices[0]?.message?.content ?? null;
            if (content !== null) {
              console.log(content); // optionally log to the console
              setRequestData(content); // store the message in state
            }
            return;
          }

          // Streaming: read the event stream chunk by chunk.
          const reader = response.body.getReader();
          const decoder = new TextDecoder('utf-8');
          let partialData = '';
          let finished = false;

          while (!finished) {
            const { done, value } = await reader.read();
            if (done) {
              break;
            }
            partialData += decoder.decode(value, { stream: true });

            // A chunk may end mid-line, so keep the last (possibly incomplete)
            // line in the buffer for the next read.
            const lines = partialData.split('\n');
            partialData = lines.pop();

            for (let line of lines) {
              if (!line.startsWith('data: ')) {
                continue;
              }
              line = line.substring(6).trim();
              if (line === '[DONE]') {
                finished = true; // end of the streamed response
                break;
              }
              try {
                const jsonData = JSON.parse(line);
                const content = jsonData.choices[0]?.delta?.content;
                if (content) {
                  console.log(content); // optionally log to the console
                  setRequestData(prevData => prevData + content); // append to state
                }
              } catch (e) {
                console.error('Error parsing JSON:', e);
              }
            }
          }
        } catch (error) {
          console.error('Final error:', error);
        }
      }

      return (
        <div>
          <button onClick={sendRequest}>Send</button>
          <pre>{requestData}</pre>
        </div>
      );
    }
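The streamed reply handled in the fetch example is a sequence of `data: ` lines that ends with `[DONE]`, and a network chunk can stop in the middle of a line. The sketch below shows one way to handle that in Python without any network access: it buffers incomplete lines and returns only completed content fragments. The class name `SSEContentParser` is hypothetical, and the event shape (`choices[0].delta.content`) is assumed to match what the fetch example reads.

```python
import json
from typing import List


class SSEContentParser:
    """Incrementally extract delta content from streamed `data:` lines."""

    def __init__(self) -> None:
        self._buffer = ""   # holds a trailing, possibly incomplete line
        self.done = False   # set once the [DONE] marker has been seen

    def feed(self, chunk: str) -> List[str]:
        """Consume decoded text and return the content fragments it completes."""
        pieces: List[str] = []
        if self.done:
            return pieces
        self._buffer += chunk
        # Everything before the last newline is complete; keep the rest buffered.
        *lines, self._buffer = self._buffer.split("\n")
        for line in lines:
            line = line.strip()
            if not line.startswith("data:"):
                continue
            data = line[len("data:"):].strip()
            if data == "[DONE]":
                self.done = True
                break
            try:
                event = json.loads(data)
            except json.JSONDecodeError:
                continue  # skip malformed events instead of aborting the stream
            choices = event.get("choices") or []
            if choices:
                content = (choices[0].get("delta") or {}).get("content")
                if content:
                    pieces.append(content)
        return pieces
```

Feed it each decoded chunk as it arrives and append the returned fragments to the text shown to the user; stop reading once `done` is true.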

Java

    import okhttp3.*;

    import java.io.IOException;
    import java.util.concurrent.TimeUnit;

    public class Test {

        private static final String OPENAI_API_KEY = "<your_access_token>";
        private static final String OPENAI_API_BASE = "https://deepseek.alayanew.com/v1/chat/completions";

        public static void main(String[] args) {
            // Long read/write timeouts leave room for slow, long generations.
            OkHttpClient client = new OkHttpClient().newBuilder()
                    .connectTimeout(10, TimeUnit.SECONDS)
                    .readTimeout(10, TimeUnit.MINUTES)
                    .writeTimeout(10, TimeUnit.MINUTES)
                    .build();

            MediaType mediaType = MediaType.parse("application/json");
            // Request body, split across lines for readability.
            String json = "{"
                    + "\"messages\": ["
                    + "{\"role\": \"system\", \"content\": \"You are a helpful assistant\"},"
                    + "{\"role\": \"user\", \"content\": \"You are a top-rated secondary-school Chinese language teacher who specializes in Tang poetry, can closely imitate the poetic styles of Li Bai and Du Fu, and is very open to other people's suggestions.\"}"
                    + "],"
                    + "\"model\": \"deepseek-r1\","
                    + "\"frequency_penalty\": 0, \"presence_penalty\": 0,"
                    + "\"max_tokens\": 2048,"
                    + "\"response_format\": {\"type\": \"text\"},"
                    + "\"stop\": null, \"stream\": false, \"stream_options\": null,"
                    + "\"temperature\": 1, \"top_p\": 1,"
                    + "\"tools\": null, \"tool_choice\": \"none\","
                    + "\"logprobs\": false, \"top_logprobs\": null"
                    + "}";
            RequestBody body = RequestBody.create(mediaType, json);

            Request request = new Request.Builder()
                    .url(OPENAI_API_BASE)
                    .method("POST", body)
                    .addHeader("Content-Type", "application/json")
                    .addHeader("Accept", "application/json")
                    .addHeader("Authorization", "Bearer " + OPENAI_API_KEY)
                    .build();

            // try-with-resources closes the response body after it is read.
            try (Response response = client.newCall(request).execute()) {
                System.out.println(response.body().string());
            } catch (IOException e) {
                e.printStackTrace();
            }
        }
    }

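Usage

The inference service calls the large model through the API. Based on the steps described above, the basic workflow is: register and log in to an enterprise account, enable the API service, obtain an API Key, and call the model.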

Billing

The platform provides dedicated resources for deploying models. For details, see the billing documentation.